NDPCenter Evaluation of Measuring Up

Summary of Technical Report from November 2017

National Dropout Prevention Center’s evaluation of Measuring Up reveals students experienced substantial academic progress

Overview

During the 2016–17 school year, the National Dropout Prevention Center/Network conducted a year-long study of the Measuring Up program and its impact on student outcomes, as measured by Measuring Up Live 2.0 – Insight and by state-administered assessments. The evaluation focused on the effect of the digital Measuring Up Live 2.0 program and the print Measuring Up Instructional Worktexts on ELA and Mathematics scores. Two methods were used for this evaluation:

- quantitative data collection using pre- and post-program assessment scores and

- qualitative data collection from student and teacher questionnaires and classroom observations.

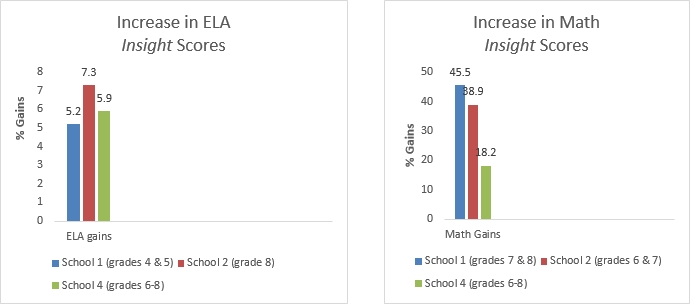

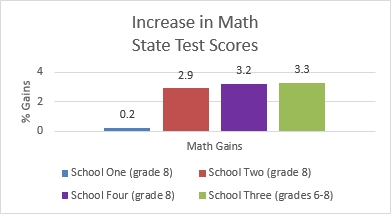

In all schools, Measuring Up Insight scores were used to measure growth in ELA and Mathematics, and state-administered assessment scores were used to measure growth in Mathematics. The results of this study indicated that the Measuring Up program has a positive effect on progress toward learning goals and better prepares students for ELA and Mathematics assessments.

Quantitative Findings

Student scores were collected pre- and post-program to assess gains over the school year. Measuring Up Live 2.0 – Insight scores were gathered for ELA and Mathematics before implementation and again near the end of program use as a post-assessment. A range of grade levels, from 4 through 8, was assessed in ELA and Mathematics at each school.

State-administered Mathematics assessment scores from the previous school year were used as a pre-program benchmark, and then post-program growth was measured using scale scores from the 2016–17 school year.

Qualitative Findings

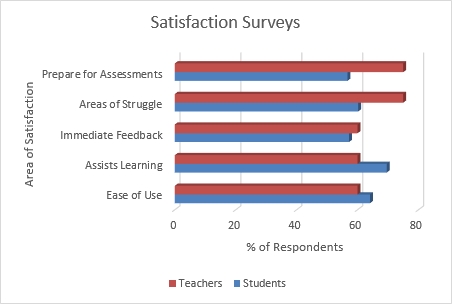

In addition to collecting assessment results, the evaluation included classroom observations and questionnaires. Overall, both students and teachers were satisfied with their experience using Measuring Up (print and digital) and felt that students were better prepared for tests in ELA and Mathematics. Students indicated that the digital program was easy to use, especially in areas where they struggle with the material, and they appreciated the immediate feedback provided by the program. In responses to open-ended questions, students said they liked that the program “helps me understand things better” and that it “tells me what I got wrong and gives me a hint, and then I can try again.” One student indicated that Measuring Up is “fun, easy, and helps my ability to do better.”

Teachers also felt that the program was easy to use and that they would benefit from additional professional development for the program, a service provided by the Measuring Up implementation team. Teachers appreciated having the print materials when technology was unavailable due to connectivity or bandwidth limitations. The overwhelming majority of teachers agreed that their students were better prepared for assessments because of Measuring Up.

Factors That May Predict Positive Outcomes

Implementation varied significantly from school to school and from classroom to classroom. Teacher training prior to implementation of the program was found to be a significant contributor to success. Even so, all four schools saw gains in student achievement, regardless of implementation and training scenarios. In addition, students tended to prefer the digital version of the program. However, since access to technology in some classrooms was limited, teachers often gravitated to the print materials. The blended solution (print and digital) that Measuring Up offers promotes differentiated instruction and independent practice, and allows for various coaching techniques in the classroom. As shown by the study, struggling students displayed substantial academic growth, even with differing levels of implementation, participation, and access to technology.

Complete findings within the National Dropout Prevention Center/Network Measuring Up Evaluation Technical Report can be found on our website, www.masteryeducation.com/research.html.